The End of General Support for VMware NSX Data Center for vSphere (NSX-v) was January 16, 2022. More details can be found here: https://kb.vmware.com/s/article/85706

If you are still running NSX-v, start planning your migration to NSX-T as soon as possible, as this can be a complex and time-consuming job.

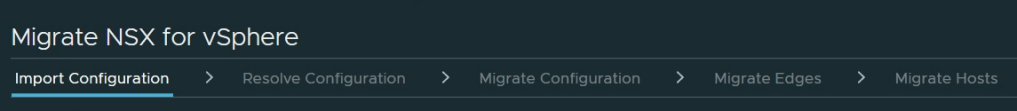

I have done several NSX-v to NSX-T migrations lately and thought I should share some of my experiences, starting with my latest End-to-End Migration using Migration Coordinator. This was a VMware Validated Design implementation containing a Management Workload Domain and one VI Workload Domain. I will not go into all the details involved, but rather focus on the challenges we faced and how we resolved them.

Challenge 1

The first error we got in Migration Coordinator was this:

Config translation failed [Reason: TOPOLOGY failed with "'NoneType' object has no attribute 'get'"]

After some investigation we tried rebooting the NSX-v Manager appliance, but this did not resolve it. We then noticed that the EAM status on NSX Manager was stuck in a Starting state and never changed to Up. After a while we found that the “Universal Synchronization Service” was stopped, so we started it manually, which made the EAM status change to Up. I am not sure how the two are related, but we never saw the error again.

Challenge 2

The next error we got in Migration Coordinator:

Config migration failed [Reason: HTTP Error: 400: Active list is empty in the UplinkHostSwitchProfile. for url: http://localhost:7440/nsxapi/api/v1/host-switch-profiles]

We went through the logs trying to figure out what was causing this, but never found any clues. We ended up going through every Portgroup on the DVS and found one unused Portgroup with no Active Uplinks. We deleted this Portgroup since it was not in use and this resolved the problem. If the Portgroup had been in use, we could have added an Uplink to it.
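
Going through every Portgroup by hand is tedious on a large DVS, so a check like this could be scripted. Below is a minimal Python sketch that works on plain dictionaries. The record layout (`name`, `active_uplinks`, `standby_uplinks`) and the sample Portgroup names are hypothetical and would have to be filled from your own inventory export. This is not how Migration Coordinator itself performs the check.

```python
from typing import Dict, List


def portgroups_without_active_uplinks(portgroups: List[Dict]) -> List[str]:
    """Return the names of port groups whose active uplink list is empty or missing."""
    return [pg["name"] for pg in portgroups if not pg.get("active_uplinks")]


# Hypothetical inventory export; names and layout are illustrative only.
inventory = [
    {"name": "pg-mgmt", "active_uplinks": ["Uplink 1"], "standby_uplinks": ["Uplink 2"]},
    {"name": "pg-unused", "active_uplinks": [], "standby_uplinks": []},
]

for name in portgroups_without_active_uplinks(inventory):
    print(f"Portgroup with no active uplinks: {name}")
```

Any Portgroup this reports would then need either an active Uplink added or, if unused, to be deleted before retrying the config migration.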

Challenge 3

Migration Coordinator failed to remove the NSX-v VIBs from the ESXi host. We couldn’t work out why at first, so we tried to remove the VIBs manually using these commands:

esxcli software vib get -n esx-nsxv

Showed that the VIB was installed.

esxcli software vib remove -n esx-nsxv

Failed with “No VIB matching VIB search specification 'esx-nsxv'”

After rebooting the host, the same command successfully removed the NSX-v VIBs.

Challenge 4

NSX-T VIBs failed to install/upgrade due to insufficient space in the bootbank on the ESXi host.

This was a common problem a few years back, but I hadn’t seen this in a while.

The following KB has more details and helped us figure out what to do: https://kb.vmware.com/s/article/74864

After removing many unused VIBs we were able to make enough room to install NSX-T. When we did this in advance on other hosts, we also got rid of Challenge 3.
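
To catch this before the migration starts, free space can be checked on each host in advance. Below is a minimal Python sketch using the standard library's `shutil.disk_usage`. The helper names are my own, and the threshold passed as `required_mb` is a placeholder, not a figure from the KB.

```python
import shutil


def free_mb(path: str) -> float:
    """Return the free space, in MiB, on the filesystem that holds `path`."""
    return shutil.disk_usage(path).free / (1024 * 1024)


def has_room(path: str, required_mb: float) -> bool:
    """Return True if the filesystem at `path` has at least `required_mb` MiB free."""
    return free_mb(path) >= required_mb


def report(path: str, required_mb: float) -> str:
    """Build a one-line summary suitable for a pre-migration checklist."""
    status = "OK" if has_room(path, required_mb) else "TOO LOW"
    return f"{path}: {free_mb(path):.1f} MiB free, need {required_mb} MiB -> {status}"
```

On an ESXi host this could be called as `print(report("/bootbank", required_mb))`, with `required_mb` set to whatever the NSX-T VIBs for your version actually need.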

Challenge 5

During the “Migrate NSX Data Center for vSphere Hosts” step we noticed the following in the doc:

“You must disable IPFIX and reboot the ESXi hosts before migrating them.”

We had already disabled IPFIX, but we hadn’t rebooted the hosts, so we decided to do that. However, this caused all VMs to lose network connectivity. NSX-v was running in CDO mode, so I am not sure why this happened, but it probably has to do with the fact that the control plane is down at this point in the process. We had a maintenance window scheduled, so the customer didn’t mind, but next time I would make sure to reboot the hosts in advance.

Challenge 6

The customer was using LAG, and when we checked the Host Transport Nodes in NSX-T Manager, they all had PNIC/Bond Status Degraded. Because we had migrated all PNICs and VMKs to the N-VDS, the hosts were still connected to a VDS that no longer had any PNICs attached. Removing the VDS solved this problem.

Challenge 7

Since we were migrating from NSX-v to NSX-T in a Management Cluster, we would end up migrating NSX-T Manager from a VDS to an N-VDS.

The NSX-T Data Center Installation Guide has the following guidance as well as details on how to configure this:

“In a single cluster configuration, management components are hosted on an N-VDS switch as VMs. The N-VDS port to which the management component connects to by default is initialized as a blocked port due to security considerations. If there is a power failure requiring all the four hosts to reboot, the management VM port will be initialized in a blocked state. To avoid circular dependencies, it is recommended to create a port on N-VDS in the unblocked state. An unblocked port ensures that when the cluster is rebooted, the NSX-T management component can communicate with N-VDS to resume normal function.”

Hopefully this post will help you avoid some of these challenges when migrating from NSX-v to NSX-T.