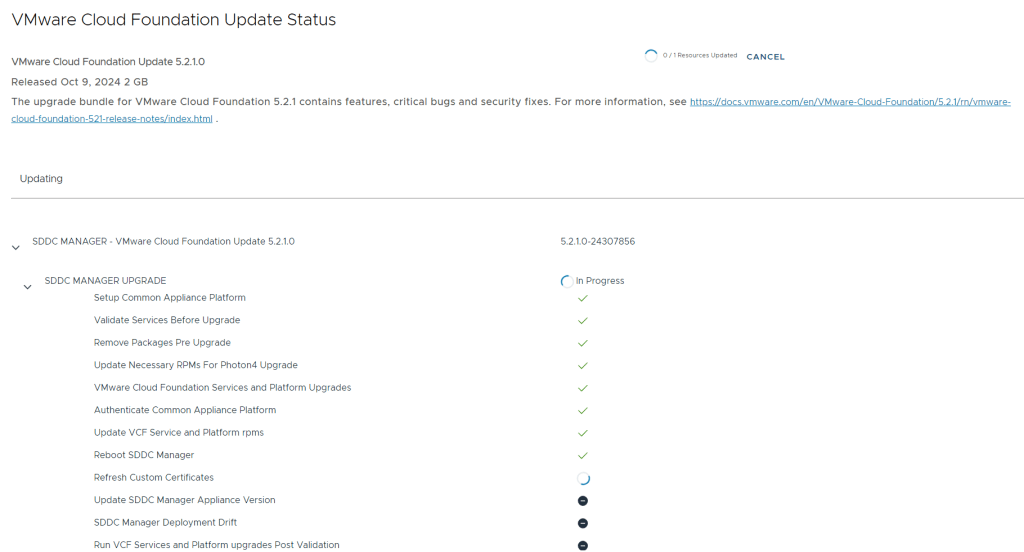

I recently upgraded a customer from VMware Cloud Foundation (VCF) 5.0.0.1 to 5.1. The upgrade went well in the end, but I had some issues along the way that I would like to share in this quick post.

The first issue I ran into was that I could not select 5.1 as the target version. Instead, I got an error message saying “not interopable: ESX_HOST 8.0.2-22380479 -> SDDC_MANAGER 5.0.0.1-22485660”. I found VMware KB95286, which resolved this problem.

After SDDC Manager was upgraded to 5.1, I got the following error message when going to the Updates tab for my Management WLD:

Retrieving update patches bundles failed. Unable to retrieve aggregated LCM bundles: Encountered error requesting http://127.0.0.1/v1/upgrades api - Encountered error requesting http://127.0.0.1/v1/upgrades api: 500 - "{\"errorCode\":\"VCF_ERROR_INTERNAL_SERVER_ERROR\",\"arguments\":[],\"message\":\"A problem has occurred on the server. Please retry or contact the service provider and provide the reference token.\",\"causes\":[{\"type\":\"com.vmware.evo.sddc.lcm.model.error.LcmException\"},{\"type\":\"java.lang.IllegalArgumentException\",\"message\":\"No enum constant com.vmware.evo.sddc.lcm.model.bundle.BundleSoftwareType.MULTI_SITE_SERVICE\"}],\"referenceToken\":\"H0IKSH\"}"

Scheduling immediate update of bundle failed. Something went wrong. Please retry or contact the service provider and provide the reference token.
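The interesting part of that message is buried in an escaped JSON payload. As a minimal sketch (not an official tool, and assuming the message has the same shape as the one above, with the payload as a quoted string after the HTTP status), something like this pulls out the `causes` list so the real error is easier to spot. The function name `extract_causes` and the shortened sample message are my own.

```python
import json


def extract_causes(raw_message: str) -> list:
    """Return the 'causes' list embedded in an SDDC Manager style error string.

    Assumes the JSON payload appears as a double-quoted, escaped string
    starting at the first occurrence of '"{' in the message.
    """
    start = raw_message.index('"{')
    decoder = json.JSONDecoder()
    # First pass: decode the outer JSON string literal (removes the escaping).
    inner_text, _end = decoder.raw_decode(raw_message, start)
    # Second pass: decode the actual JSON object.
    payload = json.loads(inner_text)
    return payload.get("causes", [])


if __name__ == "__main__":
    # Shortened version of the error above, kept as a raw string so the
    # backslashes survive exactly as they appear in the UI.
    sample = (
        r'Encountered error requesting http://127.0.0.1/v1/upgrades api: 500 - '
        r'"{\"errorCode\":\"VCF_ERROR_INTERNAL_SERVER_ERROR\",'
        r'\"causes\":[{\"type\":\"java.lang.IllegalArgumentException\",'
        r'\"message\":\"No enum constant com.vmware.evo.sddc.lcm.model.bundle.'
        r'BundleSoftwareType.MULTI_SITE_SERVICE\"}]}"'
    )
    for cause in extract_causes(sample):
        print(cause.get("type"), "-", cause.get("message"))
```

In my case the cause that mattered was the `IllegalArgumentException` about `MULTI_SITE_SERVICE`, which is the same text that shows up in the Bundle Management errors.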

Going to Bundle Management in SDDC Manager gave me the following error message:

Retrieving available bundles failed. Unable to retrieve aggregated domains upgrade status: Encountered fetching http://127.0.0.1/lcm/inventory/upgrades api - No enum constant com.vmware.evo.sddc.lcm.model.bundle.BundleSoftwareType.MULTI_SITE_SERVICE

Retrieving all applicable bundles failed. Encountered fetching http://127.0.0.1/lcm/inventory/upgrades api - No enum constant com.vmware.evo.sddc.lcm.model.bundle.BundleSoftwareType.MULTI_SITE_SERVICE

Fortunately, my colleague Erik G. Raassum had blogged about this issue the day before: https://blog.graa.dev/vCF510-Upgrade

The solution was to follow VMware KB94760 and delete all obsolete bundles.

Next up was that the NSX Precheck failed with the following error message:

NSX Manager upgrade dry run failed. Do not proceed with the upgrade. Please collect the support bundle and contact VMWare GS. Failed migrations: Starting parallel Corfu Exception during Manager dry-run : Traceback (most recent call last): File "/repository/4.1.2.1.0.22667789/Manager/dry-run/dry_run.py", line 263, in main start_parallel_corfu(dry_run_path) File "/repository/4.1.2.1.0.22667789/Manager/dry-run/dry_run.py", line 150, in start_parallel_corfu subprocess.check_output([str(fullcmd)], File "/usr/lib/python3.8/subprocess.py", line 415, in check_output return run(*popenargs, stdout=PIPE, timeout=timeout, check=True, File "/usr/lib/python3.8/subprocess.py", line 516, in run raise CalledProcessError(retcode, process.args, subprocess.CalledProcessError: Command '['python3 /repository/4.1.2.1.0.22667789/Manager/dry-run/setup_parallel_corfu.py']' returned non-zero exit status 255.

I dug into the logs without finding anything helpful. I started thinking about what the technical geniuses at The IT Crowd would do, so I rebooted all NSX Manager nodes and tried the upgrade again. This time the precheck succeeded for NSX Manager, but it failed for the NSX Edge Nodes with the following error message:

nkk-c01-ec01 - Edge group upgrade status is FAILED for group 3373386e-5c41-4851-806d-76f0841a5a7d nkk-c01-en01 : [Edge 4.1.2.1.0.22667789/Edge/nub/VMware-NSX-edge-4.1.2.1.0.22667799.nub download OS task failed on edge TransportNode aa134203-1446-4c65-b17f-41c60e325d55: clientType EDGE , target edge fabric node id aa134203-1446-4c65-b17f-41c60e325d55, return status download_os execution failed with msg: Exception during OS download: Command ['/usr/bin/python3', '/opt/vmware/nsx-common/python/nsx_utils/curl_wrapper', '--show-error', '--retry', '6', '--output', '/image/VMware-NSX-edge-4.1.2.1.0.22667799/files/target.vmdk', '--thumbprint', '7aa5bae4a6eddf034c42d0fb77613e9212fec19a7855a8db0af37ed71c3fe7f6', 'https://nkk-c01-nsx01a.cybernils.net/repository/4.1.2.1.0.22667789/Edge/ovf/nsx-edge.vmdk'] returned non-zero code 28: b'curl_wrapper: (28) Failed to connect to nkk-c01-nsx01a.cybernils.net port 443: Connection timed out\n' .].
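The key detail in that wall of text is the curl exit code 28: the Edge Node timed out connecting to the NSX Manager on port 443. A quick way to rule out general network problems is a plain TCP connect test. This is only a sketch under the assumption that you have a machine with Python on a network that should be able to reach the manager; it does not run on the Edge Node itself and does not prove the Edge's own path is healthy. The helper name `can_connect` is mine.

```python
import socket


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers timeouts, refused connections and DNS resolution failures.
        return False


if __name__ == "__main__":
    # Hostname taken from the error message above; replace with your own.
    target = "nkk-c01-nsx01a.cybernils.net"
    state = "reachable" if can_connect(target) else "NOT reachable"
    print(f"{target}:443 is {state}")
```

If the port is reachable from elsewhere but the Edge Node still times out, the problem is more likely on the Edge side, which fits with the reboot helping in my case.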

A quick status check of all the Edge Nodes didn’t help, so I went ahead and rebooted them all and tried the upgrade again. This time all prechecks went well and the upgrade was also successful without any further issues.

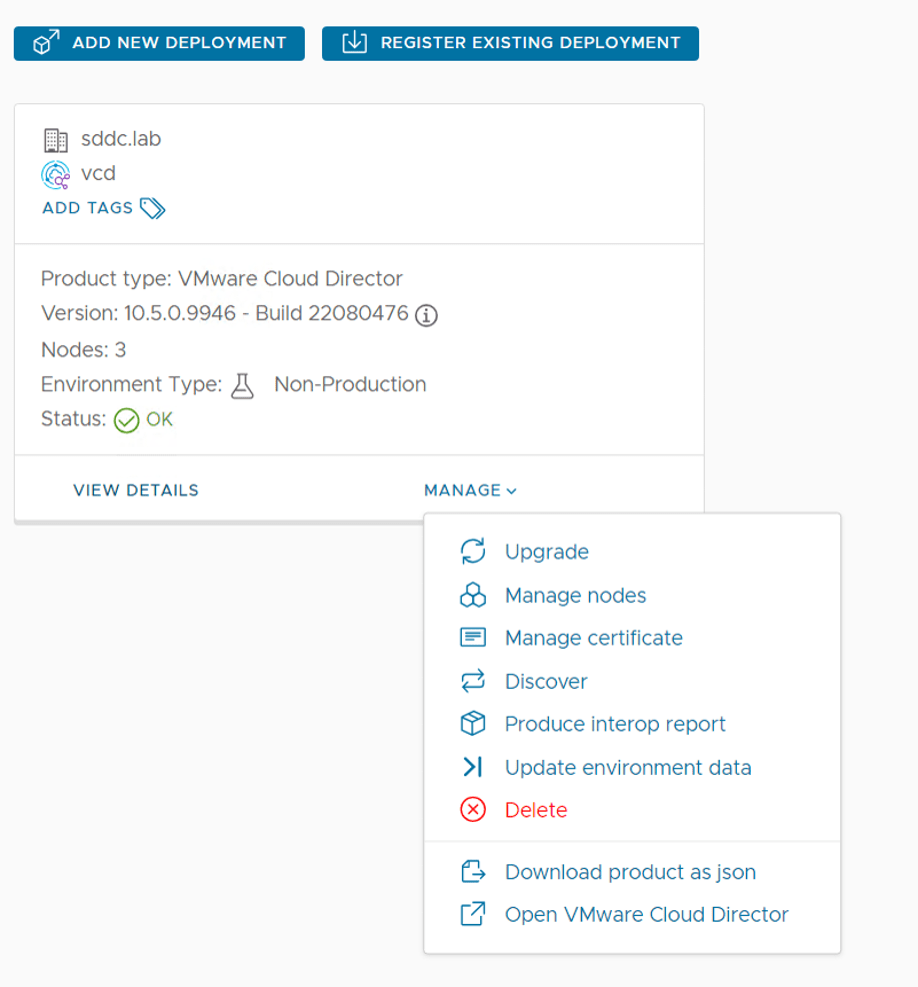

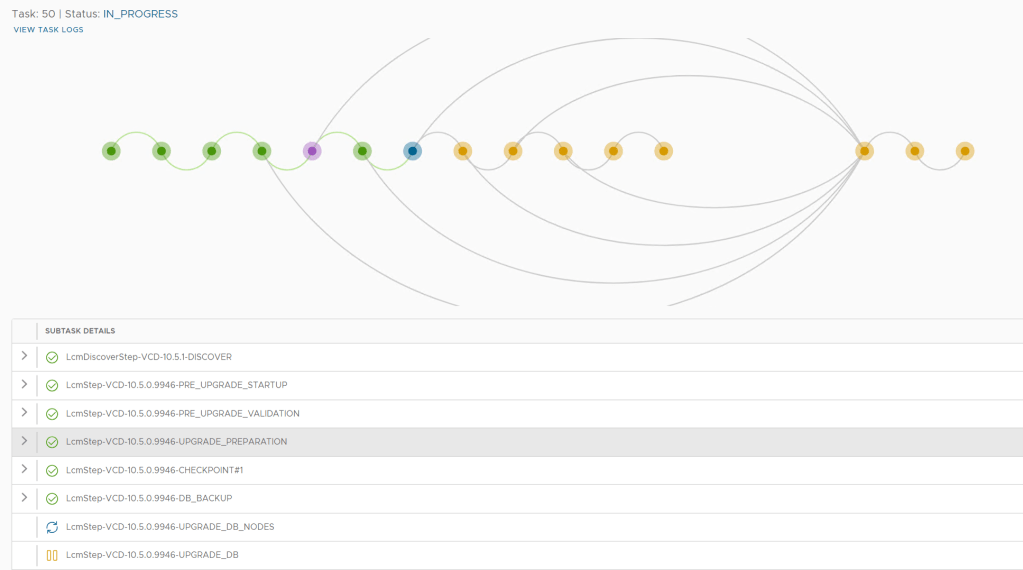

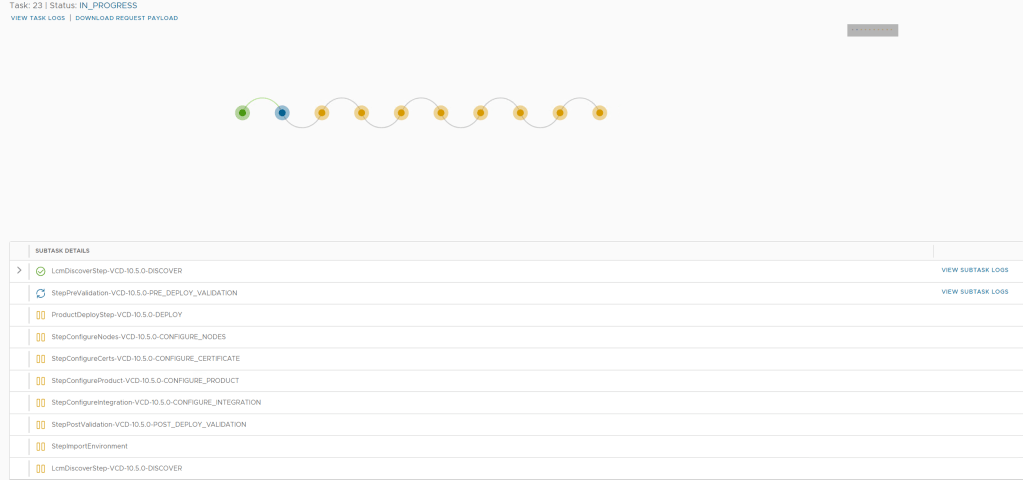

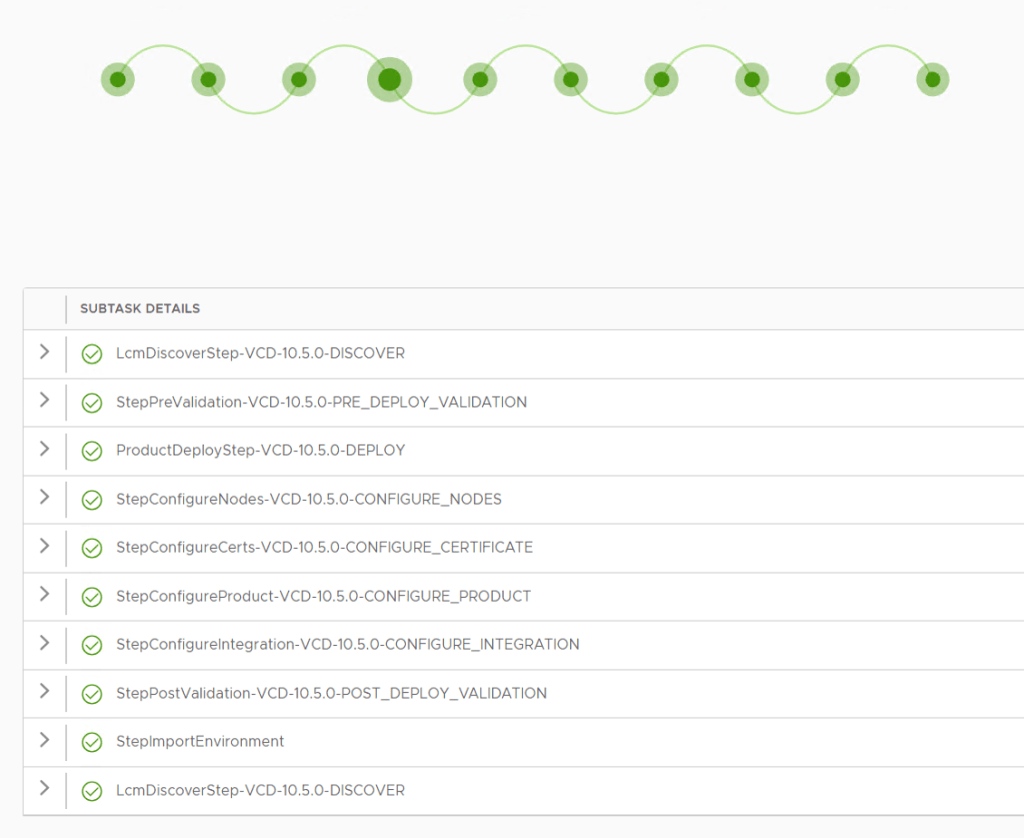

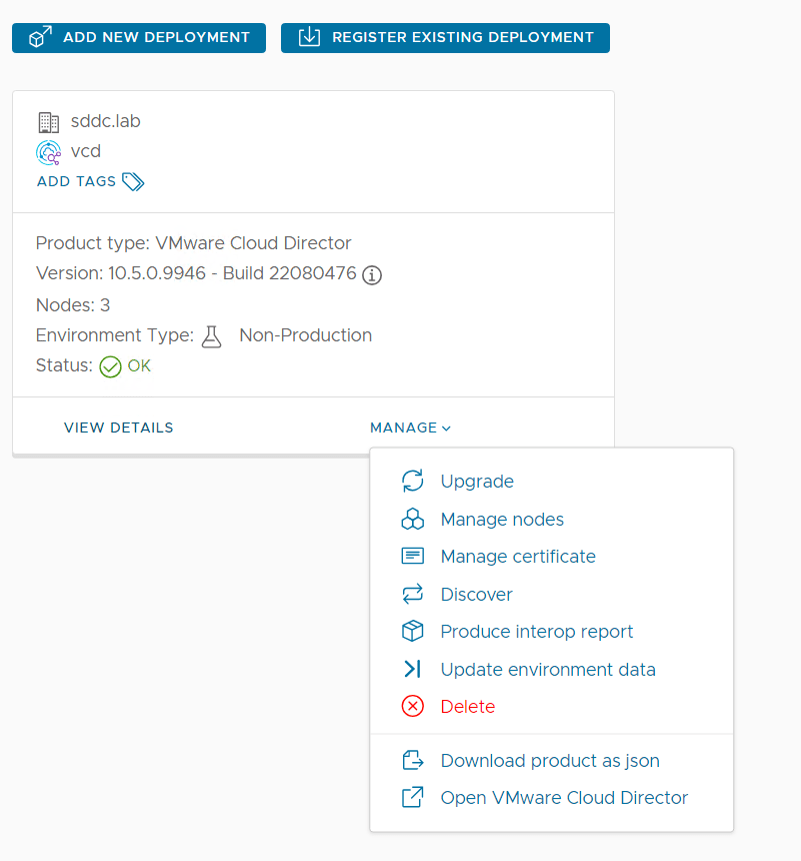

I also ran into a few issues while upgrading the Aria Suite. After upgrading VMware Aria Suite Lifecycle from version 8.12 to 8.14.1, the Build and Version numbers were not updated even though the upgrade was successful. This was resolved by following VMware KB95231.

When trying to upgrade Aria Operations for Logs to version 8.14.1, I got the following error message:

Error Code: LCMVRLICONFIG40004

Invalid hostname provided for VMware Aria Operations for Logs.

Invalid hostname provided for VMware Aria Operations for Logs import.

This was fixed by removing the SHA1-based algorithms and SSH-RSA-based keys from the SSH service configuration on VMware Aria Operations for Logs, following VMware KB95974.
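The KB has the actual steps, so I won't repeat them here. To get an overview of which entries are affected before editing anything, a small scan of an sshd_config-style text for algorithm names containing `sha1` or `ssh-rsa` can help. This is a hypothetical helper of my own, not part of the KB, and the sample configuration below is made up for illustration.

```python
def find_weak_ssh_entries(config_text: str) -> list:
    """List (line number, keyword, algorithm) for entries mentioning SHA1 or ssh-rsa."""
    weak_markers = ("sha1", "ssh-rsa")
    hits = []
    for number, line in enumerate(config_text.splitlines(), start=1):
        stripped = line.strip()
        # Skip blank lines and comments.
        if not stripped or stripped.startswith("#"):
            continue
        parts = stripped.split(None, 1)
        if len(parts) < 2:
            continue
        keyword, value = parts
        # Algorithm lists are comma separated.
        for item in value.replace(" ", "").split(","):
            if any(marker in item.lower() for marker in weak_markers):
                hits.append((number, keyword, item))
    return hits


if __name__ == "__main__":
    # Made-up example configuration, not copied from a real appliance.
    sample = """\
# example only
KexAlgorithms curve25519-sha256,diffie-hellman-group14-sha1
HostKeyAlgorithms ssh-rsa,rsa-sha2-512
MACs hmac-sha2-256
"""
    for number, keyword, item in find_weak_ssh_entries(sample):
        print(f"line {number}: {keyword} contains {item}")
```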

After upgrading Aria Operations to version 8.14.1, I kept getting the following error message over and over again:

Client API limit has exceeded the allowed limit.

Following VMware KB82721 and setting CLIENT_API_RATE_LIMIT to 30 solved this.

Quite a troublesome upgrade, but at least most of the problems were fixed quickly by either turning something off and on again, or following a KB.